> The Rise of Agentic AI: Autonomous Systems That Act Like Digital Colleagues

AI is shifting from tools you use to systems that work alongside you. This is the agentic AI era.

Artificial intelligence is entering a new phase, one where systems don’t just respond to prompts but actively pursue goals.

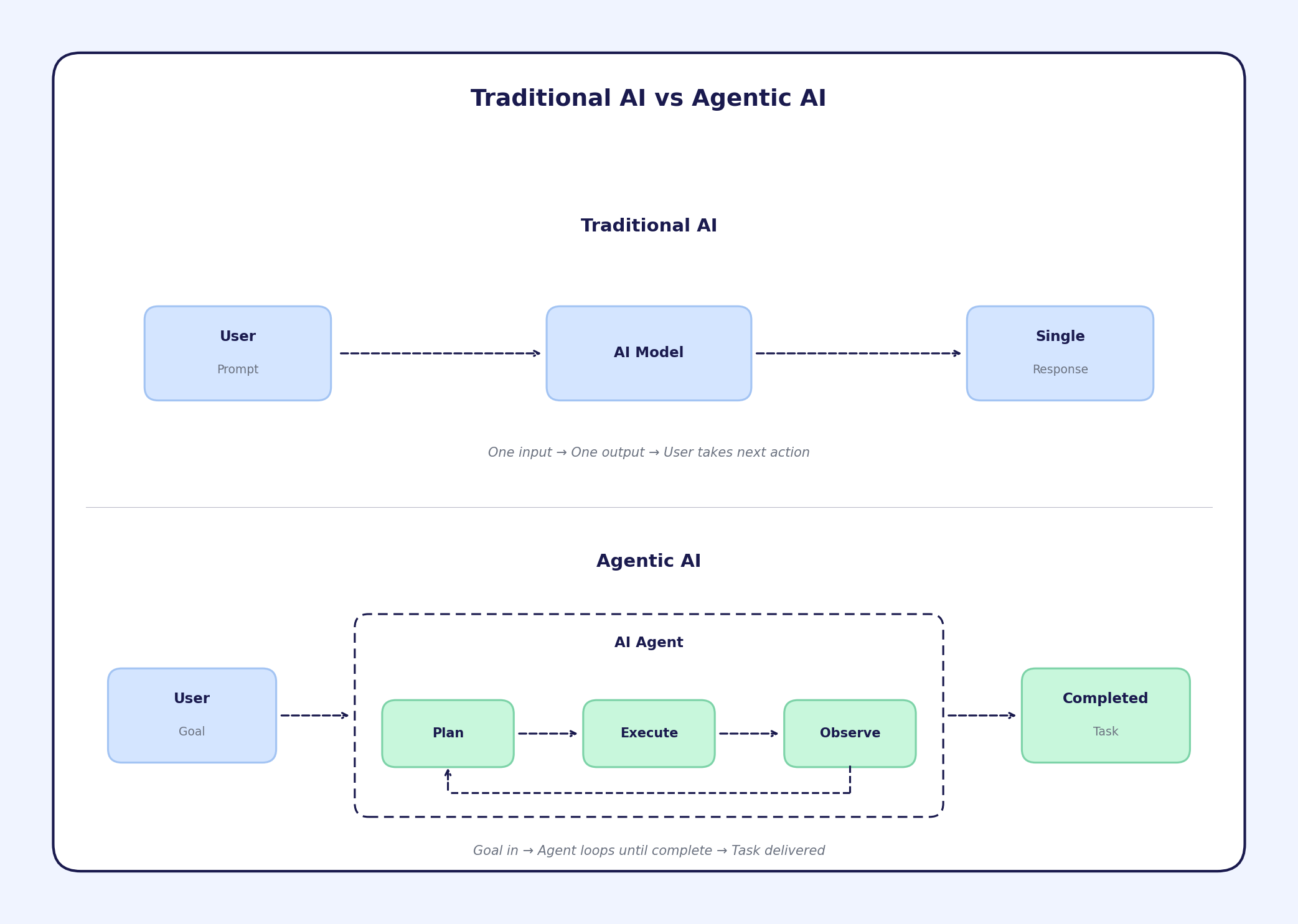

For years, interacting with AI meant typing a request and receiving a response. The model waited for instructions. It didn’t plan ahead, call tools, or adjust strategy.

But a new paradigm is emerging: agentic AI. Agentic AI systems can reason, plan, act, and adapt autonomously to complete multi-step objectives.

Instead of being tools we operate, these systems behave more like digital colleagues working alongside us.

This article explores:

- What agentic AI is.

- How it works.

- Where it is already practical.

- The technical challenges that remain.

What Is Agentic AI?

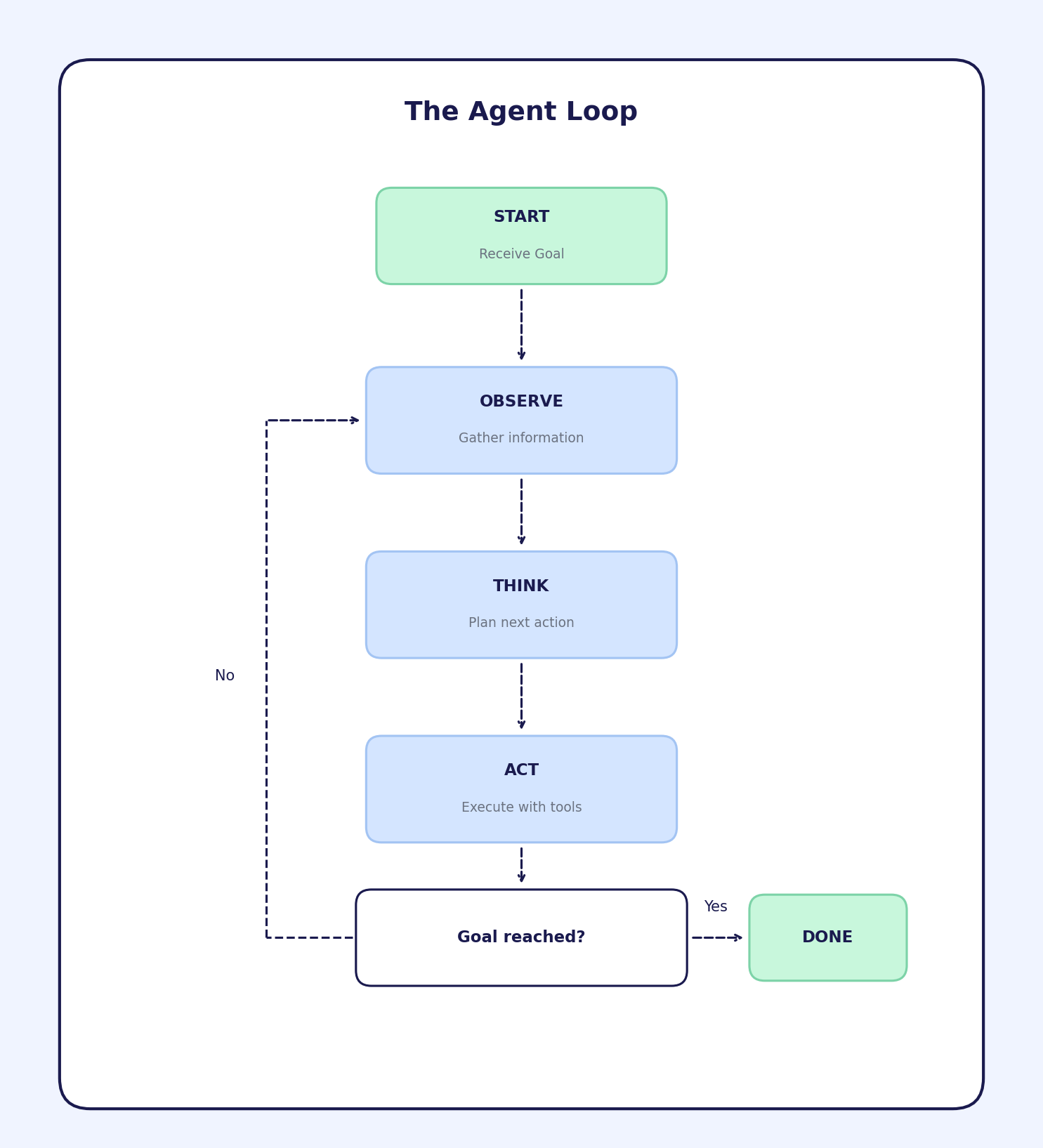

Agentic AI refers to autonomous systems that can interpret goals, plan steps, take actions across tools, evaluate results, and iterate until an objective is achieved.

An agentic system can:

- Receive a goal or objective

- Plan a sequence of actions to achieve that goal

- Execute those actions using tools (APIs, databases, browsers, code)

- Observe outcomes and adjust based on results

- Continue until the goal is complete or it determines the goal cannot be achieved

The key difference from traditional AI assistants is that agents operate in loops. They do not produce a single response. They produce a series of actions, evaluate feedback, and iterate.

Here is a concrete example.

Traditional AI assistant:

You: "Find me flights from NYC to London under $800 for next month"

AI: "Here are some options: [list of flights with prices and links]"

You: [click links, compare, book manually]

AI agent:

You: "Book me the cheapest direct flight from NYC to London for March 15-22. Use my saved payment method."

Agent:

- Searches flight aggregators

- Compares prices across dates

- Identifies cheapest direct option ($743 on British Airways)

- Navigates to booking page

- Fills in passenger details from your profile

- Completes payment

- Sends confirmation to your email

You: [receive confirmation]

The agent took a goal and executed a multi-step workflow without you guiding each step. In other words, think of agents as automated decision-making engines: you bring the request, and the agent uses the tools available in its environment to work out how to solve it.

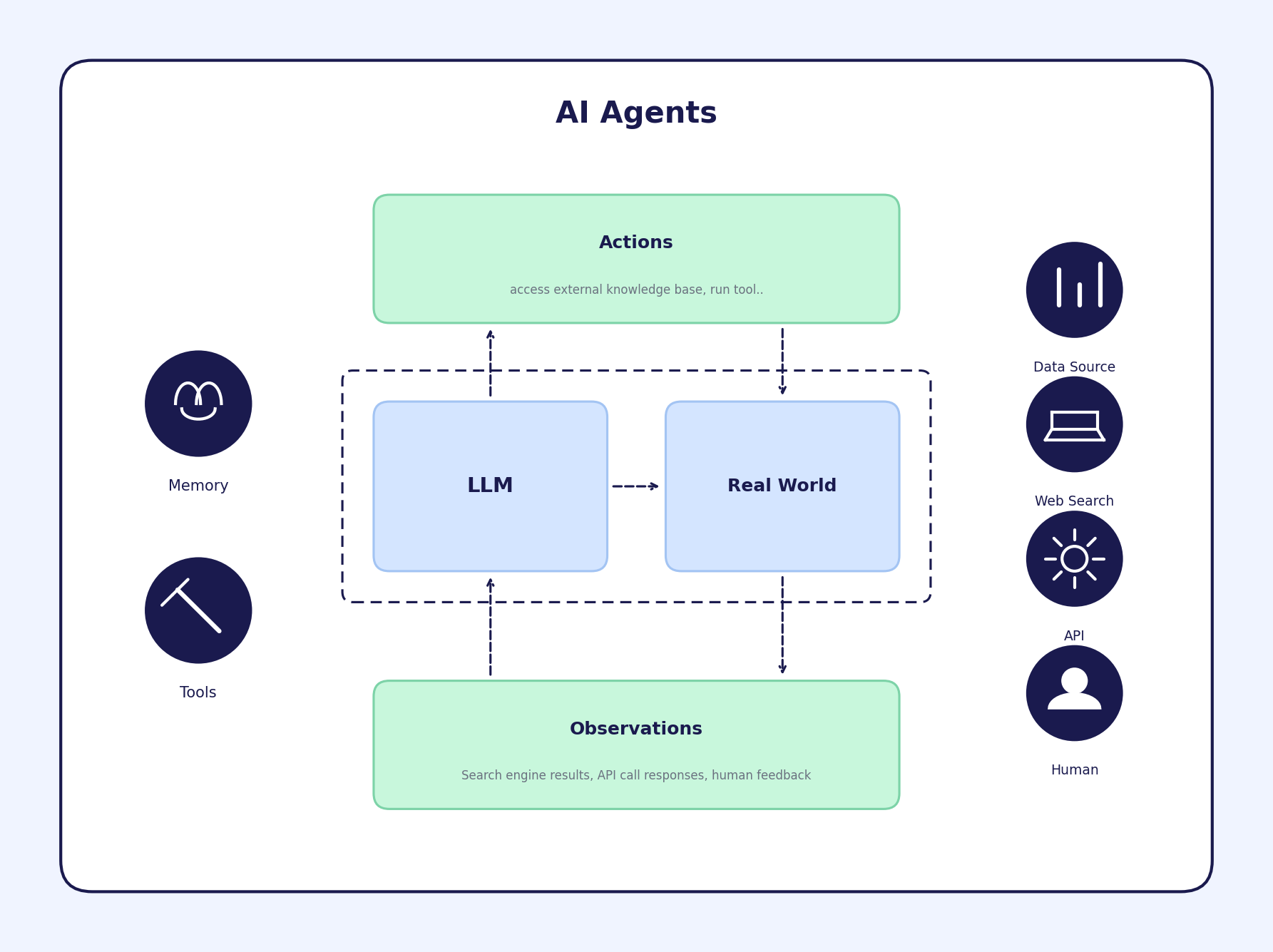

Agents autonomously direct their own processes and execution flow, choosing which tools to use based on the task at hand. These tools can include web search engines, databases, APIs, and more, enabling agents to interact with the real world.

How Agentic AI Works (Core Architecture)

Most AI agents share a common structure.

1. The Reasoning Core

This is typically a large language model (LLM) that interprets goals, plans steps, and decides what to do next. Models like GPT-4, Claude, and Gemini serve this function. The LLM acts as the "brain" that processes information and makes decisions.

2. Tools and Actions

Agents need ways to affect the world. Common tools include:

- Web browsers (navigate sites, click buttons, fill forms)

- Code execution (run Python, query databases)

- APIs (send emails, create calendar events, update CRMs)

- File systems (read documents, write reports)

An agent without tools is just a chatbot. Tools give agents the ability to act.
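As a minimal sketch of how tools might be exposed to an agent, the snippet below registers plain Python functions under names the reasoning core could choose from. The names `TOOLS`, `tool`, `call_tool`, `send_email`, and `read_file` are hypothetical, and the tool bodies are stubs rather than real integrations.

```python
from typing import Callable, Dict

# Hypothetical registry: tool name -> callable. Not tied to any real framework.
TOOLS: Dict[str, Callable[..., str]] = {}


def tool(name: str) -> Callable[[Callable[..., str]], Callable[..., str]]:
    """Decorator that registers a function as a tool under the given name."""
    def register(fn: Callable[..., str]) -> Callable[..., str]:
        TOOLS[name] = fn
        return fn
    return register


@tool("send_email")
def send_email(to: str, subject: str) -> str:
    # Stub: a real tool would call an email API here.
    return f"email queued for {to}: {subject}"


@tool("read_file")
def read_file(path: str) -> str:
    # Stub: returns a placeholder instead of touching the file system.
    return f"<contents of {path}>"


def call_tool(name: str, **kwargs: str) -> str:
    """Dispatch a tool call by name; unknown tools are reported, not raised."""
    if name not in TOOLS:
        return f"error: unknown tool '{name}'"
    return TOOLS[name](**kwargs)
```

Returning an error string for an unknown tool, instead of raising, is one possible design choice: it lets the agent see the failure as an observation and try something else.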

3. Memory

Agents need to remember what they have done, what worked, and what failed. This includes:

- Short-term memory: the current task context and recent actions

- Long-term memory: past interactions, user preferences, learned patterns

Without memory, agents repeat mistakes and cannot learn from experience.
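One way to picture the two kinds of memory is a bounded buffer of recent actions next to a persistent key-value store. The `AgentMemory` class below is a hypothetical illustration under that assumption; production systems often use databases or vector stores for the long-term part.

```python
from collections import deque
from typing import Deque, Dict, List


class AgentMemory:
    """Hypothetical two-tier memory: recent-action buffer plus a key-value store."""

    def __init__(self, short_term_size: int = 3) -> None:
        # Short-term: only the most recent actions are kept; older ones fall off.
        self.short_term: Deque[str] = deque(maxlen=short_term_size)
        # Long-term: facts that persist across tasks (here, just a dict).
        self.long_term: Dict[str, str] = {}

    def record_action(self, action: str) -> None:
        self.short_term.append(action)

    def remember(self, key: str, value: str) -> None:
        self.long_term[key] = value

    def context(self) -> List[str]:
        """Build what the agent would see: stored facts first, then recent actions."""
        facts = [f"{k}: {v}" for k, v in sorted(self.long_term.items())]
        return facts + list(self.short_term)
```

In this sketch a stored preference such as `remember("seat", "aisle")` survives no matter how many actions are recorded, while the action buffer silently drops its oldest entries.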

4. The Agent Loop

Agents operate in a continuous cycle: plan the next step, act through a tool, observe the outcome, and adjust.

This continues until the task is complete, the agent gets stuck, or it determines the goal is impossible.
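The loop can be sketched in a few lines. In the hypothetical example below, `decide` stands in for the LLM reasoning core (here a scripted function, not a real model call), the tools are stubs, and `max_steps` is an assumed safeguard so that a stuck agent stops rather than looping forever.

```python
from typing import Callable, Dict, List, Optional, Tuple

# A decision is (tool_name, argument); None means the planner considers the goal done.
Decision = Optional[Tuple[str, str]]


def run_agent(
    goal: str,
    decide: Callable[[str, List[str]], Decision],
    tools: Dict[str, Callable[[str], str]],
    max_steps: int = 10,
) -> Tuple[str, List[str]]:
    """Plan, act, observe, repeat, with a step budget as a safeguard."""
    history: List[str] = []
    for _ in range(max_steps):
        decision = decide(goal, history)                    # plan the next step
        if decision is None:
            return "complete", history
        name, arg = decision
        if name not in tools:
            history.append(f"{name}: unknown tool")         # failure becomes an observation
            continue
        observation = tools[name](arg)                      # act
        history.append(f"{name}({arg}) -> {observation}")   # observe
    return "stuck", history                                 # step budget exhausted


# Stand-in for the LLM: a scripted planner loosely based on the flight example.
def scripted_planner(goal: str, history: List[str]) -> Decision:
    steps = [("search", "NYC-LON"), ("book", "cheapest direct")]
    return steps[len(history)] if len(history) < len(steps) else None


demo_tools: Dict[str, Callable[[str], str]] = {
    "search": lambda query: "3 direct flights found",
    "book": lambda choice: "booking confirmed",
}

status, log = run_agent("Book a flight", scripted_planner, demo_tools)
```

The returned status distinguishes a finished task from an exhausted step budget, which mirrors two of the three exit conditions described above. Detecting that a goal is impossible would need extra logic in the planner.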

How Agentic AI Differs From Traditional AI

| Aspect | Traditional AI | Agentic AI |
|---|---|---|
| Interaction | Single prompt, single response | Goal in, completed task out |
| Control | Human guides each step | Agent decides steps autonomously |
| Scope | Answers questions | Executes workflows |
| Error handling | Returns error to user | Attempts recovery, tries alternatives |
| State | Stateless (each request is independent) | Stateful (remembers context across actions) |
| Tools | None or limited | Multiple integrated tools |

The shift is from AI as an oracle (ask and receive answers) to AI as a worker (assign and receive completed work).

Where Agentic AI Works Today

Marketing Operations

Marketing teams run repetitive workflows: scheduling posts, analyzing campaign performance, generating reports, updating ad copy, and segmenting audiences. These workflows follow predictable patterns that agents handle well.

AI agents can monitor campaign metrics and pause underperforming ads automatically. They generate weekly performance reports and distribute them to stakeholders. They create variations of ad copy and test them across segments. They update content calendars based on engagement data. A human marketer might spend 4-6 hours on a monthly report. An agent completes it in minutes.

Human Resources

HR departments process applications, schedule interviews, answer employee questions, manage onboarding, and track compliance. Much of this work is procedural.

AI agents screen resumes against job requirements and rank candidates. They coordinate interview schedules across multiple calendars, checking availability for interviewers and candidates, sending invites, and updating the applicant tracking system. They answer benefits questions by searching policy documents. They generate offer letters from templates with correct compensation data. They track onboarding task completion and send reminders. Coordination that typically requires multiple emails and calendar checks becomes a single request.

Research and Analysis

Research tasks require gathering information from multiple sources, synthesizing findings, and producing structured outputs. Analysts, consultants, and knowledge workers spend significant time on these activities.

AI research agents search across databases, websites, and internal documents to collect relevant information. They read and summarize lengthy reports, papers, and articles. They compare data points across sources and identify patterns or contradictions. They compile findings into structured formats such as briefs, tables, or presentations. They track topics over time and alert users to new developments. A competitive analysis that once took a researcher two days of searching, reading, and writing can now be drafted in an hour. The agent handles the information gathering while the human focuses on interpretation and strategic decisions.

Customer Support

Support teams answer questions, troubleshoot issues, process returns, and escalate complex cases. Many support interactions follow decision trees.

AI agents can resolve common issues by accessing knowledge bases and taking actions. They process refunds and returns within policy guidelines. They escalate to human agents with full context when needed. They follow up on open tickets and request updates from customers. A damaged shipment complaint that once required a support agent to check order history, verify tracking, review policy, and process a resolution can now be handled end to end by an agent.

Technical Challenges

Agentic AI works for certain tasks. It fails at others. Understanding the limitations matters more than understanding the capabilities.

Reliability

Agents fail. They misinterpret goals. They take wrong actions. They get stuck in loops. They hallucinate information. Current failure rates for complex multi-step tasks range from 20-50% depending on the task and agent architecture. That is too high for critical workflows. The problem compounds with task length: each step has some probability of error, so the chance of a flawless run shrinks with every step added.
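To make the compounding concrete: if each step succeeds independently with probability p, a task of n steps succeeds with probability p^n. The 95% per-step rate below is an illustrative assumption, not a measurement, and real steps are rarely fully independent.

```python
def task_success_probability(per_step_success: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent steps."""
    if not 0.0 <= per_step_success <= 1.0:
        raise ValueError("per_step_success must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step_success ** steps


# Illustrative only: how an assumed 95% per-step rate decays with task length.
for steps in (1, 5, 10, 20):
    p = task_success_probability(0.95, steps)
    print(f"{steps:>2} steps at 95% per step -> {p:.0%} overall")
```

Under this assumption, a 20-step task completes without error only about 36% of the time, even though each individual step looks reliable.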

Verification

How do you know an agent did the right thing? Checking agent work requires reviewing each action and its outcome. For long workflows, this can take longer than doing the task yourself.

Current approaches:

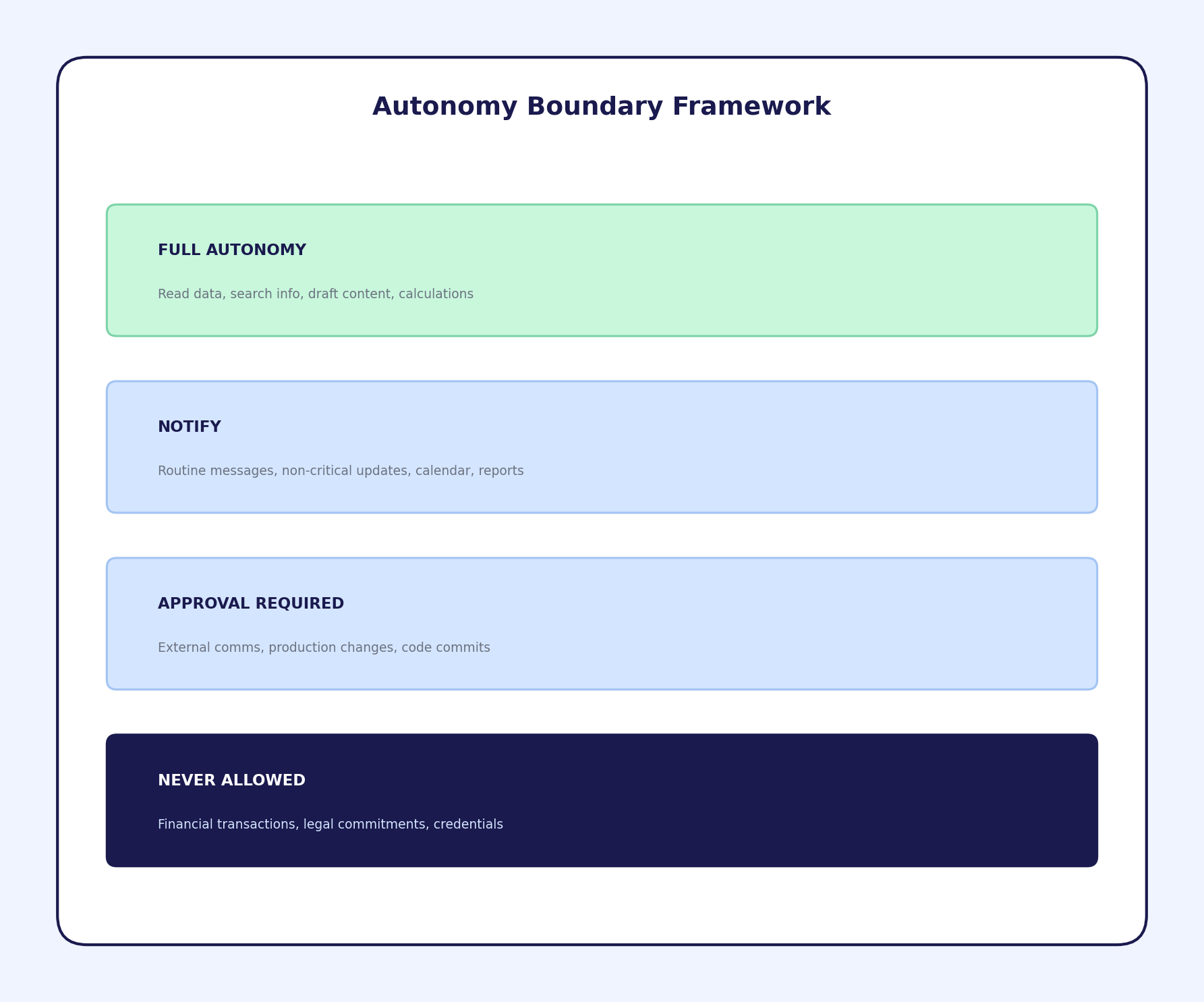

- Require human approval before high-stakes actions (sending emails, making payments)

- Log all actions for audit

- Test agents extensively before deployment

- Start with low-risk tasks and expand scope gradually

None of these fully solve the verification problem.
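The first two approaches, approval before high-stakes actions and logging everything, can be combined in one small wrapper. This is a sketch under assumptions: the `HIGH_STAKES` set, the `approve` callback, and the log format are all hypothetical, and a real deployment would persist the log somewhere durable.

```python
from typing import Callable, Dict, List

# Assumption: which actions count as high-stakes is a deployment decision.
HIGH_STAKES = {"send_email", "make_payment"}


def execute_with_oversight(
    action: str,
    run: Callable[[], str],
    approve: Callable[[str], bool],
    audit_log: List[Dict[str, str]],
) -> str:
    """Run an action, asking for approval first if it is high-stakes; log every outcome."""
    if action in HIGH_STAKES and not approve(action):
        audit_log.append({"action": action, "status": "rejected"})
        return "rejected"
    result = run()
    audit_log.append({"action": action, "status": "executed", "result": result})
    return result
```

Here `approve` could be anything from a command-line prompt to a ticket in a review queue. Low-risk actions skip it entirely but still leave an audit entry.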

Security

Agents with tool access are attack vectors. Prompt injection attacks trick agents into executing unintended actions. A malicious email could instruct an email processing agent to forward sensitive data.

The security model for AI agents is still immature. Most deployments rely on manual review and limited tool access.

Context Limits

LLMs have finite context windows. Long tasks generate long histories. Eventually the agent "forgets" earlier steps or constraints.

Workarounds include:

- Summarizing history periodically

- Storing facts in external memory

- Breaking tasks into smaller sub-tasks

These help but do not eliminate the problem.
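The first workaround, periodic summarization, might look like the sketch below. The function and parameter names are hypothetical, and `count_summary` is a placeholder: a real agent would more likely ask the LLM to write the summary, which is exactly where earlier constraints can still get lost.

```python
from typing import Callable, List


def compact_history(
    history: List[str],
    summarize: Callable[[List[str]], str],
    max_entries: int = 6,
    keep_recent: int = 3,
) -> List[str]:
    """Replace older entries with one summary line once the history grows too long."""
    if len(history) <= max_entries:
        return history
    split = len(history) - keep_recent
    older, recent = history[:split], history[split:]
    return [f"summary: {summarize(older)}"] + recent


# Placeholder summarizer that only counts entries.
def count_summary(entries: List[str]) -> str:
    return f"{len(entries)} earlier steps"
```

With eight recorded steps and the defaults above, the agent would carry forward one summary line plus the three most recent steps.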

Cost

Agent tasks require many LLM calls. A single workflow might use hundreds of API calls. At current pricing, complex agent tasks can cost $1-10 per execution, so high-volume use cases become expensive. Costs are dropping as models become more efficient, but for now cost limits agent deployment to high-value tasks.
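A back-of-the-envelope estimate shows how per-execution cost adds up. Every number below (200 calls, 2,000 tokens per call, $0.01 per 1,000 tokens) is a made-up assumption for illustration and does not reflect any provider's actual pricing.

```python
def estimate_task_cost(llm_calls: int, tokens_per_call: int, usd_per_1k_tokens: float) -> float:
    """Rough cost of one agent run: total tokens times a flat per-token price."""
    total_tokens = llm_calls * tokens_per_call
    return total_tokens / 1000 * usd_per_1k_tokens


# Hypothetical workload: 200 calls of about 2,000 tokens each.
cost_per_run = estimate_task_cost(llm_calls=200, tokens_per_call=2000, usd_per_1k_tokens=0.01)
monthly_cost = cost_per_run * 10_000  # assumed 10,000 runs per month
print(f"per run: ${cost_per_run:.2f}, per month: ${monthly_cost:,.0f}")
```

Under these assumed numbers a single run costs about $4, and 10,000 runs a month would cost about $40,000, which is why volume matters as much as per-call price.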

Ethical Considerations

Accountability

When an agent makes a mistake, who is responsible? The user who gave the goal? The company that deployed the agent? The company that built the underlying model?

Current legal frameworks do not address this clearly. Most deployments require human oversight precisely because accountability is undefined.

Job Displacement

AI agents automate tasks that humans currently perform. Some roles will change. Some will disappear. The pattern from previous automation waves suggests that routine, procedural work gets automated first, while roles requiring judgment, creativity, and interpersonal skills remain human longer. Companies deploying agents face choices about how to handle workforce transitions. There are no easy answers.

Autonomy Boundaries

How much should an agent be allowed to do without human approval?

These boundaries should be explicit, documented, and enforced technically.

Transparency

Should people know when they are interacting with an agent rather than a human? In customer support contexts, disclosure is increasingly expected or required. Agents acting on behalf of individuals raise similar questions. If an agent sends an email "from" you, should recipients know it was AI-generated?

What Comes Next

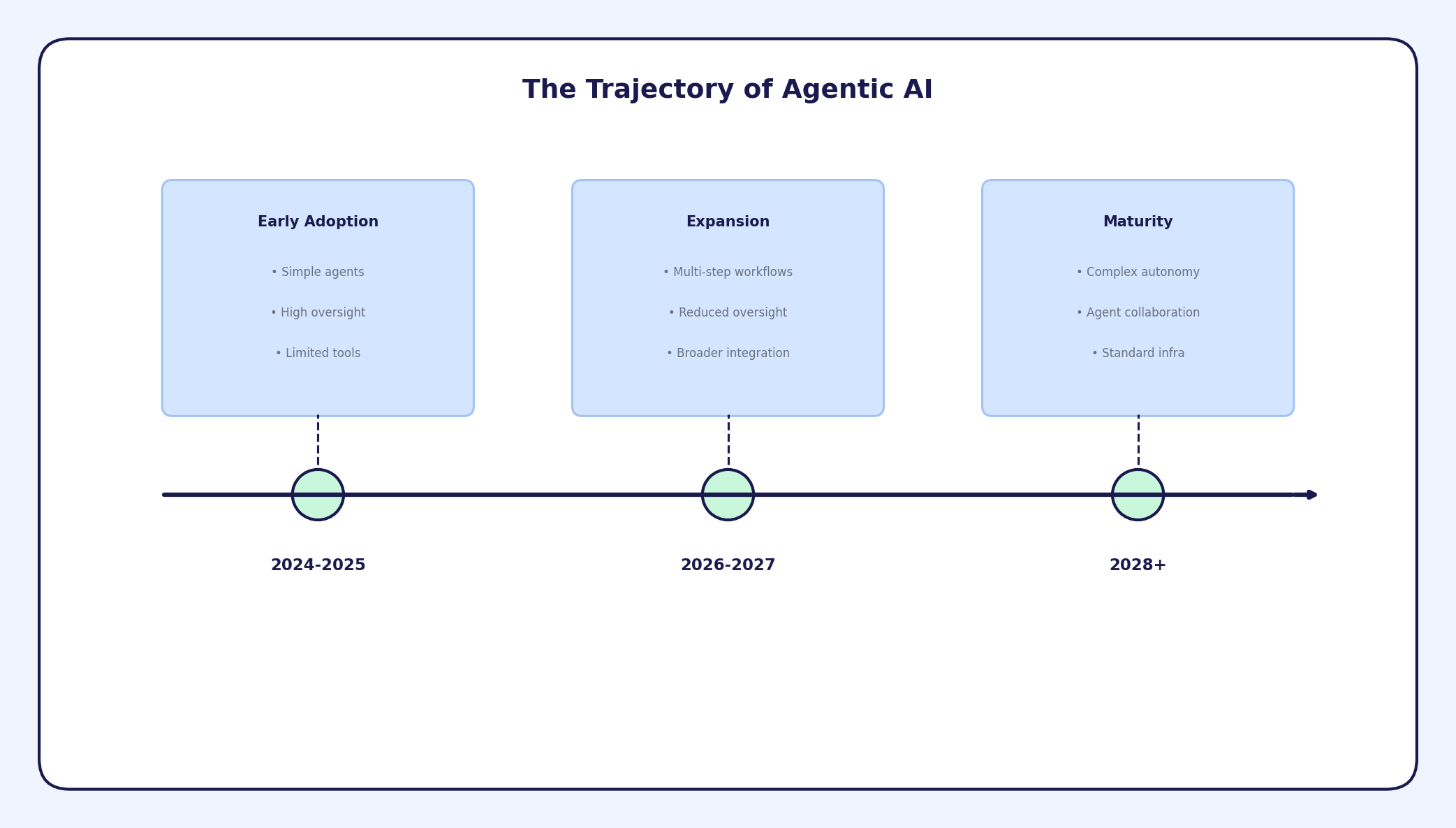

Agentic AI will improve. Reliability will increase. Costs will decrease. Tools will become more sophisticated.

For individuals, learning to work with AI agents will become a career skill. Understanding what agents can and cannot do, how to specify goals clearly, and how to verify results will matter. For organizations, decisions about where to deploy agents, how to handle accountability, and how to manage workforce changes will become strategic priorities. For builders, creating reliable, safe, useful agents is the engineering challenge of the next few years.

Summary

Agentic AI represents a shift from AI as a tool you use to AI as a worker that acts on your behalf. Agents receive goals, plan actions, execute workflows, and deliver results. The technology works today for structured, procedural tasks with clear success criteria. Marketing operations, HR processes, research and analysis, and customer support are early adoption areas.

Significant challenges remain. Reliability is not high enough for critical tasks. Verification is difficult. Security models are immature. Accountability is undefined. These are solvable problems. The trajectory is clear. The question is execution. If you have not experimented with AI agents yet, start now. Pick a simple workflow. Build a basic agent. Understand the failure modes firsthand.

The shift from prompting AI to deploying AI agents is happening. Understanding it early provides an advantage.
